One of the most valuable networking features of VMware is the ability to use CDP information, or LLDP if you are using non-Cisco devices. With it, we can see exactly which VMNIC is plugged into which switch port.

ESXi can receive CDP information and display it in the vSphere Client or Web Client. This doesn’t work with HP switches, which use LLDP (a standard vSwitch only speaks CDP), as you will see in the examples below for both vendors.

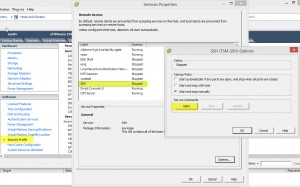

Below, the environment is plugged into an HP switch, so no CDP information is displayed for the standard switch. We can, however, send CDP information from VMware ESXi to the HP switch. (When the host is connected to a Cisco switch, the information does show.)

So open up SSH and let’s dive in.

To enable SSH, go to Configuration > Security Profile > SSH and start the service.

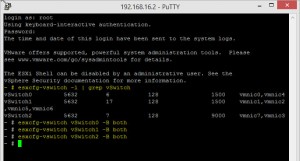

In the ESXi shell:

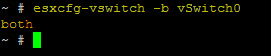

First off, you need to know which vSwitches you have. You can check this in the GUI, but CLI lovers can use the following (screenshot in point 2):

esxcfg-vswitch -l | grep vSwitch
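If you only want the switch names, for example to feed a loop, a small filter can strip out the other columns. This is a minimal sketch, assuming the usual `esxcfg-vswitch -l` layout where each standard vSwitch row starts in the first column and port group rows are indented. `list_vswitch_names` is a made-up helper name, not a VMware command, and like the grep above it only catches switches whose names start with "vSwitch".

```shell
# list_vswitch_names: hypothetical helper, not part of ESXi.
# Reads `esxcfg-vswitch -l` output on stdin and prints one
# standard vSwitch name per line.
# Assumption: switch rows begin in column 1 with a name starting
# "vSwitch"; header rows and indented port group rows are skipped.
list_vswitch_names() {
    awk '/^vSwitch/ { print $1 }'
}
```

On the host you would run it as `esxcfg-vswitch -l | list_vswitch_names`.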

1. You can see the current CDP setting by using:

esxcfg-vswitch -b vSwitch(No.)

Note the lowercase -b; this is important! Lowercase -b displays the setting, and uppercase -B sets it.

2. To set the ESXi host to both send and listen for CDP information:

esxcfg-vswitch -B both vSwitch(No.)
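If the host has several standard vSwitches, the two steps can be wrapped in a loop. The following is only a sketch under a few assumptions: vSwitch names start with "vSwitch" and contain no spaces, the options are passed in the order VMware documents them (option first, switch name last), and `enable_cdp_all` and the `ESXCFG` override variable are names invented here for illustration.

```shell
# enable_cdp_all: hypothetical helper, not part of ESXi.
# Sets CDP to "both" on every standard vSwitch, then prints whatever
# status the host reports for each one.
# ESXCFG is an invented override so the command can be swapped out
# for testing; it defaults to the real esxcfg-vswitch.
enable_cdp_all() {
    cmd="${ESXCFG:-esxcfg-vswitch}"
    # Assumption: switch rows start in column 1 with "vSwitch".
    for vs in $("$cmd" -l | awk '/^vSwitch/ { print $1 }'); do
        "$cmd" -B both "$vs" || return 1              # uppercase -B sets the mode
        printf '%s: %s\n' "$vs" "$("$cmd" -b "$vs")"  # lowercase -b reads it back
    done
}
```

Define the function in your SSH session and run `enable_cdp_all`. It stops at the first vSwitch where setting the mode fails.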

And you’re done. Now down to the switch level.

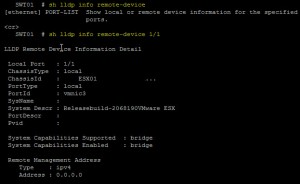

View information on HP Switches

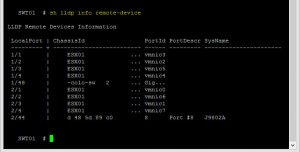

HP switches use the “Link Layer Discovery Protocol”, or LLDP. The command to view this information is:

show lldp info remote-device

This command gives us enough summary information to see which switch port maps to a particular host and VMNIC.

We can extend the command to specify a particular switch port and display more detailed information:

show lldp info remote-device [switch/port]

Note: I am currently unsure why it doesn’t show the management address of the ESXi host for the above screenshot, as this VMNIC is used for Mgmt.

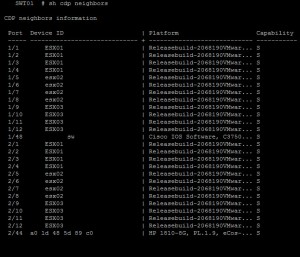

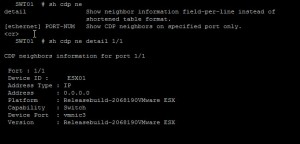

HP switches do have some CDP support, and you can view CDP information using these commands:

show cdp neighbors

show cdp neighbors detail [switch/port]

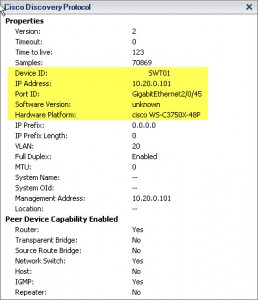

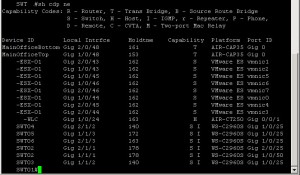

Viewing the information on Cisco Switches

Cisco switches send out CDP information on all ports by default. For security, this should be disabled and left running only on the ports that need it, once you’ve discovered which ports are in use for switches and ESXi hosts.

So by default you should be able to see something like the following:

And on the Cisco switch itself:

show cdp neighbors

show cdp neighbors detail

Here are the official VMware links on how to configure this: KB ONE, KB TWO

Ciao for now!