This follow-on blog post, which dives into how we created the vRA integration with DMS, comes from Katherine Skilling, who kindly offered to take a guest spot and provide additional content about the work we have done internally. You can find her details at the end of this blog post.

In an earlier blog post Dean covered the use of vRA (vRealize Automation) Custom Resources in the context of using vRA to create Databases in DMS (Data Management for VMware Tanzu) and how to create custom day 2 actions. In this post, we will look at how we created the Dynamic Types in vRO (vRealize Orchestrator) to facilitate the creation of the custom resources in vRA.

Introduction – What are Dynamic Types?

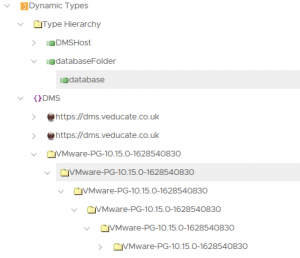

Dynamic Types are custom objects in vRO created to extend the schema so that you can create and manage 3rd party objects within vRO. Each type has a definition that contains the object’s properties as well as its relationship within the overall namespace, which is the top level in the Dynamic Types hierarchy.

As we started working on our use case, we looked at a tool (published on VMware Code) that would generate Dynamic Types based on an API Swagger specification. The problem we encountered was that the tool was quite complex, and our API Swagger specification for Data Management for VMware Tanzu (DMS) didn’t quite fit the expected format.

This meant we ended up with lots of orphaned entries after running the tool and hoping it would do all the heavy lifting for us. After spending some time investigating and troubleshooting, it became clear we didn’t understand Dynamic Types, and how they are created, well enough to resolve all our issues. Instead, we decided to scale back on our plans and focus on just the database object we really needed initially. We could use it as a learning exercise, and then revisit the generator tool later once we had a more solid foundation.

To get a better understanding of how Dynamic Types work, I recommend this blog from Mike Bombard. He walks through a theoretical example using a zoo and animals to show you how objects are related, as well as how to create the required workflows. I like this particular blog as you don’t need to consider how you are going to get values from a 3rd party system, so it’s easy to follow along and see the places where you would be making an external connection to retrieve data. It also helped me to understand the relationships between objects without getting mixed up in the properties provided by technical objects.

After reading Mike’s post I realised that we only had a single object for our use case, a database within DMS. We didn’t have any other objects related to it: it didn’t have a parent object and it didn’t have any children. So, when we created a Dynamic Type we would need to generate a placeholder object to act as the parent for the database. I chose to name this databaseFolder just for simplicity and because I’m a visual person and like to organise things inside folders. These databaseFolders do not exist in DMS; they are just objects I created within vRO. They have no real purpose or properties other than that the DMS databases are their children in the Dynamic Types inventory.

Stub Workflows

When you define a new Dynamic Type, you must create or associate four workflows with it, which are known as stubs:

- Find By Id

- Find All

- Has Children in Relation

- Find Relation

These workflows tell vRO how it can find the Dynamic Type and what its position is in the hierarchy in relation to other types. You can create one set of workflows to share across all Types or you can create a set of workflows per Type. For our use case we only needed one set of the workflows, so we created our code such that the workflows would be dedicated to just the database and databaseFolder objects.
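To keep the four stubs apart, it can help to summarise their inputs and outputs in one place. The sketch below does that as a plain JavaScript object, using the parameter names from the workflows described later in this post. The `stubContracts` object and `describeStub` helper are illustrative names only; vRO does not expose anything like them.

```javascript
// Illustrative summary only: vRO does not provide an object like this.
var stubContracts = {
    "Find By Id": { inputs: ["type", "id"], output: "resultObj" },
    "Find All": { inputs: ["type"], output: "resultObjs" },
    "Has Children in Relation": { inputs: ["parentType", "parentId", "relationName"], output: "result" },
    "Find Relation": { inputs: ["parentType", "parentId", "relationName"], output: "resultObjs" }
};

// Build a one-line description of a stub, e.g. for notes or logging.
function describeStub(name) {
    var contract = stubContracts[name];
    if (!contract) {
        return name + ": unknown stub";
    }
    return name + "(" + contract.inputs.join(", ") + ") -> " + contract.output;
}

console.log(describeStub("Find By Id"));
```

Find By Id returns a single object, Find All and Find Relation return arrays, and Has Children in Relation returns a boolean.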

It’s important to know that vRO will run these workflows automatically when administrators browse the vRO inventory, or when using Custom Resources within vRA. They are not started manually by administrators. If you do test them by running them manually, you may struggle to populate the input values correctly.

I’ll give you a bit of background to the different workflows next.

Find By Id Workflow

This workflow is automatically run whenever vRO needs to locate a particular instance of a Dynamic Type, such as when used with Custom Resources in vRA for self-service provisioning. The workflow follows these high-level steps:

- Check if the object being processed is the parent object (databaseFolder) or the child object (database).

- If it is a databaseFolder, create a new Dynamic Type object for a databaseFolder.

- If it is a database, perform the activity required to locate the object using its id value. In our case, this is a REST API call to DMS to retrieve a single database.

- Perform any activities required to create the object and set its properties. In our case, this is extracting the database details from the REST API call results, as DMS returns values such as the id and the name in a JSON object formatted as a string.
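The steps above can be sketched as a single function. This is a minimal illustration, not the workflow itself: `makeObject` is a stand-in for vRO’s `DynamicTypesManager.makeObject` so the code runs outside vRO, and `lookupDatabase` is a hypothetical callback representing the REST call to DMS.

```javascript
// Stand-in for DynamicTypesManager.makeObject so the sketch runs outside vRO.
function makeObject(namespace, typeName, id, name) {
    return { namespace: namespace, type: typeName, id: id, name: name };
}

// lookupDatabase is a hypothetical callback that wraps the REST call to DMS
// and returns the parsed database record, or null if nothing was found.
function findById(type, id, lookupDatabase) {
    var parts = type.split(".");
    var namespace = parts[0];
    var typeName = parts[1];

    if (typeName === "databaseFolder") {
        // Placeholder parent: no call to DMS is needed.
        return makeObject(namespace, typeName, "databaseFolder", "databaseFolder");
    }
    if (typeName === "database") {
        var record = lookupDatabase(id);
        return record ? makeObject(namespace, typeName, id, record.instanceName) : null;
    }
    // Unknown type: nothing to return.
    return null;
}

// Example with a fake lookup in place of the real REST call.
var found = findById("DMS.database", "42", function (id) {
    return { id: id, instanceName: "orders-db" };
});
console.log(found.name);
```

Passing the lookup in as a callback keeps the type check separate from the REST call, which makes the branching easy to try out without a DMS server.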

Find All Workflow

This workflow is automatically run whenever vRO needs to locate all instances of a Dynamic Type, such as when the Dynamic Types namespace is browsed in the vRO client, or when it is called as a sub-workflow of the Find Relation workflow. The workflow follows these high-level steps:

- Check if the object being processed is the parent object (databaseFolder) or the child object (database).

- If it is a databaseFolder, create a new Dynamic Type object for a databaseFolder.

- If it is a database, perform the activity required to locate all instances of the object. In our case, this is a REST API call to DMS to retrieve all databases.

- Perform any activities required to loop through each of the instances found. For each instance, create an object and set its properties. In our case, this is extracting all of the database details from the REST API call results, looping through each one, and extracting values such as the id and the name.

Has Children in Relation Workflow

This workflow is used by vRO to determine whether it should expand the hierarchy when an object is selected in the Dynamic Types namespace within the vRO client. If an object has child objects, these are displayed underneath it in the namespace, in the same way as the databases are displayed under the databaseFolders. The workflow follows these high-level steps:

- Check if the object being processed is the parent object (databaseFolder) or the child object (database) by checking its parentType and relationName values which are provided as workflow inputs.

- If it is a databaseFolder, call the Find Relation workflow to retrieve all related objects.

- If it is any other object type, set the result to false to indicate that there are no child objects related to the selected object to display in the hierarchy.

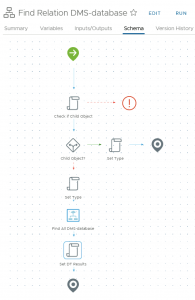

Find Relation Workflow

This workflow is used by vRO when an object is selected in the Dynamic Types namespace within the vRO client. If an object has child objects, these are displayed underneath it in the namespace, in the same way as the databases are displayed under the databaseFolders. vRO automatically runs this workflow each time the Dynamic Types namespace is browsed by an administrator to find any related objects it needs to display. The workflow follows these high-level steps:

- Check if the object being processed is the parent object (databaseFolder) or the child object (database) by checking its parentType and relationName values which are provided as workflow inputs.

- If it is a databaseFolder and the relationName value is “namespace-children”, which is a special value assigned to the very top level in the selected namespace, then create a new Dynamic Type object for a databaseFolder.

- If it is a database, set the type to DMS.database and then call the Find All workflow to retrieve the Dynamic Type objects for all database instances.

Creating a Dynamic Type

Defining a Namespace

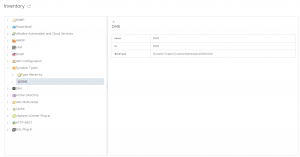

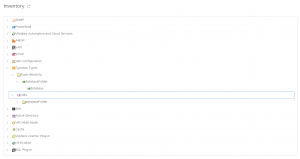

The first stage in creating our Dynamic Type is to define a new Namespace. The Namespace will hold entries for all types we create, as well as any objects created, so in our case we would have entries for the databaseFolders that we created as placeholders in vRO and any databases that exist within DMS. To define a Namespace, we run the Define Namespace workflow located in the “Library > Dynamic Types > Configuration” folder. The workflow has only a single input, which is the name we want to assign to our namespace. This namespace will be used within future workflows and will be visible within the vRO inventory. I chose DMS for the namespace since this described its purpose and was flexible enough if we chose to create multiple Dynamic Types in the future. After running the workflow, you can see your namespace in the vRO inventory as a purple icon; it will be empty initially.

Define Types

The second stage is to define the different Dynamic Types we want to use within vRO. For our use case we have two, databaseFolder which is our placeholder parent, and database which represents a database created within DMS. With two types we needed to run the workflow twice to define each type individually.

The workflow to define new types is Define Type and is again located in the “Library > Dynamic Types > Configuration” folder.

This workflow has several inputs:

- Namespace – Here we select the namespace we defined in the previous workflow

- Type Name – The name we want to use for our Dynamic Type, I used database and databaseFolder for the two workflow runs.

- Icon Resource – An image stored in vRO as a Resource Element that will be used as the icon for the Dynamic Type. You can select custom images here; I simply used the existing item-16x16.png for the database object and folder-16x16.png for the databaseFolder object.

- Custom Properties (optional) – If you want to define any properties for your object you can list them here. Dynamic Types will always have a name and id property, so these do not need to be defined. For the database, I added some properties (version, status, role, databaseInstanceName, dbType, environmentId, clusterName, vCenter, databaseId) as I thought these values may be useful to store on the objects for future use.
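As a quick reference, the values used for the two runs of Define Type can be written down as data. The sketch below is only a note-taking aid; `typeDefinitions` is an illustrative name and vRO does not read an object like this. The empty property list for databaseFolder reflects that the placeholder has no properties of its own.

```javascript
// Illustrative only: these objects mirror the Define Type workflow inputs,
// they are not something vRO reads directly.
var typeDefinitions = [
    {
        namespace: "DMS",
        typeName: "database",
        customProperties: ["version", "status", "role", "databaseInstanceName", "dbType",
            "environmentId", "clusterName", "vCenter", "databaseId"]
    },
    {
        namespace: "DMS",
        typeName: "databaseFolder",
        customProperties: []
    }
];

// The type string later passed to the stub workflows has the form <namespace>.<typeName>.
var fullTypeNames = typeDefinitions.map(function (def) {
    return def.namespace + "." + def.typeName;
});
console.log(fullTypeNames.join(", "));
```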

As well as the inputs, you must also decide whether you want to generate Workflow Stubs for the type. This ties into the four stub workflows I outlined earlier. If you answer yes to this question by selecting the box, it will prompt you for a Category (aka the Folder in the vRO inventory) in which to create the workflows. If you answer no, you are prompted to select each of the four workflows to be used.

When defining the database Dynamic Type, I chose to generate the Workflow Stubs since I didn’t have any existing stub workflows to use. For the databaseFolder Dynamic Type, I did not generate new stubs; instead, I selected each of the four workflows created when I defined the database type.

When the workflow has completed you will be able to see your new Dynamic Type objects listed in the vRO inventory under the Hierarchy. They will be listed as siblings, that is they will appear at the same level in the hierarchy as we have not yet defined a relationship between them.

Define Relationship

The final stage in the initial configuration of the Dynamic Types is to define a relationship between the different Dynamic Types you have defined. In our use case we only have two objects – database and databaseFolder, and therefore only need to define a single relationship.

The workflow we need this time is named, yep you guessed it, Define Relationship, and it is located in the same folder as the other workflows we have been using.

The workflow needs three inputs:

- Parent Type – This is the parent object of our relationship, in our case, it is the databaseFolder Dynamic Type we defined.

- Child Type – This is the child object of our relationship: it will appear underneath the parent type in the hierarchy when expanded. In our case, it is the database Dynamic Type we defined.

- Relation Name – A string assigned as the name of the relationship. There is no mandatory naming format for this value. I chose to follow the examples I had seen in other blog posts and use the format parent type-child type, so I named it databaseFolder-database.
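The relation name is compared as a plain string in the stub workflows later in this post, so it needs to be typed identically everywhere it appears. A small helper, sketched here with illustrative function names, is one way to build and check the name consistently:

```javascript
// Build a relation name in the "<parent type>-<child type>" format.
function relationNameFor(parentTypeName, childTypeName) {
    return parentTypeName + "-" + childTypeName;
}

// Compare after trimming so stray whitespace around the value does not cause a mismatch.
function isRelation(relationName, parentTypeName, childTypeName) {
    return String(relationName).trim() === relationNameFor(parentTypeName, childTypeName);
}

console.log(relationNameFor("databaseFolder", "database"));
```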

Now if you browse the vRO inventory you will see the Dynamic Types in the Hierarchy section with a parent-child relationship structure. Notice that if you expand the namespace then it shows the parent object with an arrow indicating it can be expanded to show child objects, however, no child objects exist yet as we have not instructed vRO on how to discover these. That will be our next step, to update the Stub workflows vRO generated for us to include the required REST API calls to query DMS for the data we need.

Updating the Stub Workflows

This is the final stage of the Dynamic Types configuration, and at this stage, the code you need could be very different from what I am going to describe. The code I used is based on how DMS works, and the API calls I needed to be able to locate all databases, or a single database. My hope is that by walking through what I did, I can help you create the code you need.

Within our vRO instance, we have already set up a REST host to our DMS server and created a workflow to allow us to get a token from the DMS server to be able to log in and perform actions. We have wrapped all these activities together into a single workflow which we call at the start of each workflow that involves a REST call. I’m not going to go into the detail of this workflow in this blog post; it is effectively just a couple of REST calls and a bit of data manipulation in a vRO script to retrieve the token value and pass it to DMS in a login API call to authenticate our user account. Feel free to download the final workflows and poke around in the code to see what we did.
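To give a rough idea of the “data manipulation” part, here is a minimal sketch of pulling a token out of a login response and turning it into request headers. It is not the code from our workflow. Both the `token` field name and the `Authorization: Bearer` header format are assumptions made for illustration, so check the DMS API documentation for the real response shape and header.

```javascript
// ASSUMPTION: the login response is a JSON string with a "token" field.
// The real DMS response may be shaped differently.
function extractToken(loginResponseBody) {
    var parsed = JSON.parse(loginResponseBody);
    if (!parsed || typeof parsed.token !== "string" || parsed.token.length === 0) {
        throw new Error("Login response did not contain a token");
    }
    return parsed.token;
}

// ASSUMPTION: the token is sent as a Bearer token in the Authorization header.
function buildAuthHeaders(token) {
    return { "Authorization": "Bearer " + token, "Accept": "application/json" };
}

var headers = buildAuthHeaders(extractToken('{"token":"abc123"}'));
console.log(headers.Authorization);
```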

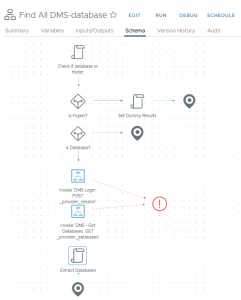

Find All DMS-database Workflow

This stub workflow as explained earlier makes an API call to DMS to retrieve all provisioned databases.

Input and Output parameters

Inputs:

- type – A String value identifying the Dynamic Type Object being processed.

Outputs:

- resultObjs – An Array of DynamicTypes:DMS.database objects found by the REST API call.

The first thing our workflow does is examine the value of the “type” input parameter, as it needs to determine if the Dynamic Type object that was viewed by the administrator, causing the workflow to start, is a database or a databaseFolder. As the type values have the format <namespace.objectname>, e.g. DMS.database, we need to split the value into two sections and just examine the second section of the results.

if(type.split(".")[1] == "databaseFolder") folder = true;

if(type.split(".")[1] == "database") database = true;
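The two checks above only ever set folder or database to true, and each one splits the string again. As an alternative sketch (the `parseDynamicType` helper is an illustrative name, not part of vRO), the split can be done once and both flags set to an explicit true or false:

```javascript
// Split a Dynamic Type string such as "DMS.database" into its two parts.
function parseDynamicType(type) {
    var parts = String(type).split(".");
    return { namespace: parts[0], typeName: parts.length > 1 ? parts[1] : "" };
}

var parsed = parseDynamicType("DMS.databaseFolder");
var folder = parsed.typeName === "databaseFolder";
var database = parsed.typeName === "database";
console.log(parsed.namespace, folder, database);
```

With explicit booleans, the decision elements that follow never see an unset variable.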

Now we use a decision to determine whether we have a database or a folder object. If it is a folder, we don’t need to contact our DMS server as it’s just a placeholder object, so we simply create a new instance of our DMS.databaseFolder Dynamic Type and assign the value to our output parameter resultObjs which is an array of Dynamic Type objects.

resultObjs = new Array();

var object = DynamicTypesManager.makeObject("DMS", "databaseFolder", "databaseFolder", "databaseFolder");

resultObjs.push(object);

That’s all the steps needed if the type is a databaseFolder, so we end the workflow.

If our type was not a folder, we need to perform a second check to ensure it is a database object and not some other random value, since our previous decision only checked if the value of our folder variable was true. If folder is false that does not automatically mean the value of our database variable is true, it just means our type value did not include the phrase databaseFolder.

This is something I learned during testing while troubleshooting some unexpected behaviour. I realised that neither the database nor folder variables were evaluating to true, and my code was not set up to handle such a scenario. To resolve this the second check was introduced to evaluate if the value of the database variable is true.

If the value of the database variable is false then we have an unexpected condition and do not need to take any action, so we simply end the workflow. We do not create an object this time as in our use case we only have database and databaseFolder objects. We have already covered the creation of the databaseFolder in our previous decision element, now we know we do not have a folder or a database object, so we do not want to take any additional action. In our workflows we have only included basic error and exception handling, in the future we could revisit the decision to simply end the workflow and include some additional error handling instead.
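If you do want to surface the unexpected case rather than silently ending, one option is to classify the type into three outcomes and record a warning for anything unknown. This is a minimal sketch with illustrative function names. It collects warnings in an array so it can run outside vRO; inside a scriptable task, `System.warn` could be called instead.

```javascript
// Classify the type string into one of three outcomes.
function classifyType(type) {
    var typeName = String(type).split(".")[1];
    if (typeName === "databaseFolder") return "folder";
    if (typeName === "database") return "database";
    return "unknown";
}

// Collect warnings instead of silently ending, so unexpected values are visible.
// In vRO, System.warn(...) could be used in place of the warnings array.
function handleType(type, warnings) {
    var kind = classifyType(type);
    if (kind === "unknown") {
        warnings.push("Unexpected Dynamic Type: " + type);
    }
    return kind;
}

var warnings = [];
console.log(handleType("DMS.table", warnings), warnings.length);
```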

If the value of the database variable is true, then we know we have a database object and want to contact our DMS server to retrieve additional information about it. Therefore, we call two workflows containing REST operations. First, we call our login workflow to authenticate to the DMS server and retrieve a token. Next, we call a workflow that makes a REST API call to retrieve all databases from the DMS server, with the token we retrieved included in the header of the REST API call as proof of our authentication.

Finally, we use a scriptable task to manipulate the output of the REST API call which returned all databases to extract the information we are interested in.

//First we check that we actually got some results back from the REST call, otherwise there is nothing to parse or navigate

if(contentLength !== 0 ){

//REST Operations in vRO return all content as a string value, so we have a JSON structure flattened into a single string. We want to convert it back into a JSON object so that we can easily navigate its structure using the dot (.) notation.

var contentAsJSON = JSON.parse(contentAsString);

var typename = type.split(".")[1];

var namespace = type.split(".")[0];

//In vRO we must initialise arrays before using them, we do this outside of our loop so that we don't overwrite our results each time

resultObjs = new Array();

//We now loop through the JSON results in the content field where the database details are held and capture the values we want to store in our Dynamic Type instance properties or set as its id and name for example.

for each (var obj in contentAsJSON.content){

var databaseId = obj.id.toString();

var databaseInstanceName= obj.instanceName.toString();

//Once we have the id and name values, we create a new Dynamic Type object in our DMS namespace, with the type of DMS.database and assign it the name and id values we got from the DMS server. We do this inside our loop so if there are multiple databases each one is created as a separate object.

var dynobj = DynamicTypesManager.makeObject(namespace, typename, databaseId, databaseInstanceName);

var status = obj.status.toString();

var version = obj.version.toString();

var dbType = obj.dbType.toString();

var role = obj.role.toString();

var environmentId = obj.environment.environmentId.toString();

var vCenter = obj.environment.vcHost.toString();

var clusterName = obj.environment.vcClusterName.toString();

//With our additional values captured we can set the custom properties on our Dynamic Type object using the setProperty method

dynobj.setProperty("databaseId",databaseId);

dynobj.setProperty("databaseInstanceName",databaseInstanceName);

dynobj.setProperty("status",status);

dynobj.setProperty("version", version);

dynobj.setProperty("dbType", dbType);

dynobj.setProperty("role", role);

dynobj.setProperty("environmentId", environmentId);

dynobj.setProperty("vCenter", vCenter);

dynobj.setProperty("clusterName", clusterName);

//Finally we need to push the object we created into our array so that if we have multiple databases we capture all of them in a single output parameter

resultObjs.push(dynobj);

}

}

//If we got no results back from the REST API call let's just log it, we aren't going to throw an exception for now.

else{

System.log("no content found for REST API call to get all databases");

}
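To try the mapping logic outside vRO, the scriptable task can be approximated with a stubbed `DynamicTypesManager` and a made-up response. In the sketch below the stub, the `toDynamicObjects` function name and every value in the sample payload are invented for illustration; only the field names are taken from the script above. It uses a standard `for` loop because `for each` is specific to the JavaScript engine inside vRO.

```javascript
// Minimal stand-in for vRO's DynamicTypesManager so the sketch runs outside vRO.
var DynamicTypesManager = {
    makeObject: function (namespace, typeName, id, name) {
        var props = {};
        return {
            namespace: namespace, type: typeName, id: id, name: name,
            setProperty: function (key, value) { props[key] = value; },
            getProperty: function (key) { return props[key]; }
        };
    }
};

// Made-up payload: the field names match the script above, the values are invented.
var contentAsString = JSON.stringify({
    content: [{
        id: "db-001", instanceName: "orders-db", status: "ONLINE", version: "13.3",
        dbType: "POSTGRES", role: "PRIMARY",
        environment: { environmentId: "env-1", vcHost: "vc01.example.local", vcClusterName: "cluster-a" }
    }]
});

function toDynamicObjects(type, body) {
    var results = [];
    if (!body) return results; // nothing came back from the REST call
    var parts = type.split(".");
    var items = JSON.parse(body).content || [];
    for (var i = 0; i < items.length; i++) {
        var item = items[i];
        var dynobj = DynamicTypesManager.makeObject(parts[0], parts[1], String(item.id), String(item.instanceName));
        dynobj.setProperty("status", String(item.status));
        dynobj.setProperty("vCenter", String(item.environment.vcHost));
        results.push(dynobj);
    }
    return results;
}

var resultObjs = toDynamicObjects("DMS.database", contentAsString);
console.log(resultObjs.length, resultObjs[0].name, resultObjs[0].getProperty("vCenter"));
```

Only two of the custom properties are set here to keep the sketch short; the remaining ones follow the same pattern.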

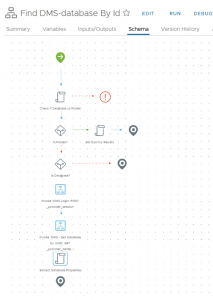

Find DMS-database By Id Workflow

This workflow is very similar to the Find All DMS-database workflow.

Input and Output parameters

Inputs:

- type – A String value identifying the Dynamic Type Object being processed.

- id – A String representing the unique id of the database to be passed to DMS in the REST API call.

Outputs:

- resultObj – A DynamicTypes:DMS.database object found by the REST API call.

We start with the same steps and code to evaluate if we are dealing with a database, a databaseFolder or some other unknown object. If it is a databaseFolder, we create a new placeholder object; if it is an unknown object, we exit the workflow. If it is a database, we again make a REST API call to our DMS server to log in and get a token. We then make a second REST API call to perform a filtered search for a single database based on the id value supplied as an input parameter.

As before, we then finish off the workflow with a scriptable task to manipulate the results of the REST API call and retrieve the values we need. The difference this time is that we know DMS will only return a single database, as each database has a unique id value. Therefore, we don’t need to loop through the results and can just assign the values to a single Dynamic Type object.

if(contentLength !== 0 ){

var contentAsJSON = JSON.parse(contentAsString);

var typename = type.split(".")[1];

var namespace = type.split(".")[0];

var databaseInstanceName= contentAsJSON.instanceName.toString();

var dynObj = DynamicTypesManager.makeObject(namespace , typename , id , databaseInstanceName);

var status = contentAsJSON.status.toString();

dynObj.setProperty("status",status );

}

resultObj = dynObj;
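Note that in the script above resultObj is assigned outside the if block, so when the REST call returns nothing it ends up undefined. If you would rather make the empty case explicit, a small parsing helper can return null instead. This is a sketch only; `parseSingleDatabase` is an illustrative name and the sample values are invented.

```javascript
// Parse the body of a single-database response, returning null when it is empty
// or not valid JSON instead of throwing.
function parseSingleDatabase(contentAsString) {
    if (!contentAsString || contentAsString.length === 0) {
        return null;
    }
    try {
        return JSON.parse(contentAsString);
    } catch (e) {
        return null;
    }
}

var db = parseSingleDatabase('{"instanceName":"orders-db","status":"ONLINE"}');
console.log(db.instanceName, parseSingleDatabase(""));
```

The calling script can then check for null once and decide whether to build the Dynamic Type object or log the miss.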

Find Relation DMS-database Workflow

This workflow is very different from the previous two. It instructs vRO about the relationship between our objects when the namespace is browsed by an administrator, and it controls whether there are child objects to display in the inventory under a Dynamic Type object.

Input and Output parameters

Inputs:

- parentType – A String value identifying the parent of the Dynamic Type Object being processed.

- parentId – A String representing the unique id of the parent Dynamic Type Object.

- relationName – A String representing the relationship between the Parent and Child Dynamic Type Objects being processed.

Outputs:

- resultObjs – An Array of DynamicTypes:DynamicObject objects found by the REST API call.

As this workflow runs whenever an item in the Dynamic Types inventory is selected, it needs to be able to identify if the object is a databaseFolder or a database and perform different actions based on the results.

The workflow starts with a scriptable task where we perform a check on the parentType and relationName input parameter values.

//Check if this is a database object by confirming the parentType value is databaseFolder, and the relationName is databaseFolder-database

if(parentType.split(".")[1] == "databaseFolder" && relationName == "databaseFolder-database") database = true;

If the values match what we are expecting for a database, then we set the database variable value to be true.

Next, we have a decision element where we check if our database variable value is true or false. What I am actually checking for here is to see if this is a child object database or if it’s some other object. If the value of database is false then it is not a database object and so we want to create a new instance of our placeholder object as we don’t need to contact our DMS server. We use a scriptable element to do this.

Note: In vRO 8 the relation name for the top level of the hierarchy is different from earlier versions that were used in the blog articles I referenced, which caused some confusion during testing. Notice that the code below uses the namespace-children value for the relationName to identify a top-level object, rather than toplevel-DMS.databaseFolder:

if(relationName == "namespace-children") {

resultObjs = new Array();

type = parentId;

var object = DynamicTypesManager.makeObject("DMS", "databaseFolder", "databaseFolder", "databaseFolder");

resultObjs.push(object);

}

If the value of database is true, then we know we have a database object so we use a scriptable task to set the value of the type parameter to DMS.database and then reuse our Find All DMS-database workflow to contact the DMS server and retrieve all databases. This populates the inventory with all known child database objects.
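Putting the two branches together, the Find Relation logic can be sketched as one function. This is an illustration rather than the workflow itself: `makeObject` stands in for `DynamicTypesManager.makeObject`, `findAll` is a hypothetical callback standing in for the Find All DMS-database workflow, and returning an empty array for any other combination is a choice made for this sketch.

```javascript
// Stand-in for DynamicTypesManager.makeObject so the sketch runs outside vRO.
function makeObject(namespace, typeName, id, name) {
    return { namespace: namespace, type: typeName, id: id, name: name };
}

// findAll is a hypothetical callback standing in for the Find All DMS-database workflow.
function findRelation(parentType, relationName, findAll) {
    if (relationName === "namespace-children") {
        // Top level of the namespace: return the single placeholder folder.
        return [makeObject("DMS", "databaseFolder", "databaseFolder", "databaseFolder")];
    }
    if (parentType.split(".")[1] === "databaseFolder" && relationName === "databaseFolder-database") {
        // Children of the folder: every database known to DMS.
        return findAll("DMS.database");
    }
    // Any other combination has no related objects.
    return [];
}

// The parentType passed for the top-level call is only a placeholder value here.
var top = findRelation("DMS", "namespace-children", function () { return []; });
var children = findRelation("DMS.databaseFolder", "databaseFolder-database", function (type) {
    return [makeObject("DMS", "database", "db-001", "orders-db")];
});
console.log(top[0].type, children[0].name);
```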

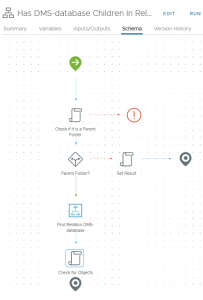

Has DMS-database Children in Relation

The final workflow is similar to the Find Relation workflow. This time it controls whether vRO provides us with an option to expand the hierarchy to view child objects. So Has Children in Relation controls whether vRO should expand the inventory and look for child objects and Find Relation populates the expanded inventory.

Input and Output parameters

Inputs:

- parentType – A String value identifying the parent of the Dynamic Type Object being processed.

- parentId – A String representing the unique id of the parent Dynamic Type Object.

- relationName – A String representing the relationship between the Parent and Child Dynamic Type Objects being processed.

Outputs:

- result – A boolean value to indicate if the Dynamic Type Object being processed has Children defined in its relationship hierarchy.

The workflow starts with the same scriptable task as the Find Relation DMS-database workflow to determine if this is a parent databaseFolder. This time we are using a variable named folder.

//Check if this is a folder or DT Object

if(parentType.split(".")[1] == "databaseFolder" && relationName == "databaseFolder-database") folder = true;

Next, we have a decision to check the value of the folder variable, if it is false then we know we haven’t got a databaseFolder object, so we don’t need to take any action. As we don’t have any other relationships defined for our Dynamic Types objects, we simply use a scriptable task to set the value of the output parameter result to false and end the workflow.

If the value of the folder variable is true then we know we have a databaseFolder object, and as we have defined a relationship that states it has child objects of type database, we need to take additional actions. To do this we reuse our Find Relation DMS-database workflow since this will check for and locate any child objects of a Dynamic Type.

Finally, we have a scriptable task to check the results of the Find Relation DMS-database workflow. This is because, while we know there is a relationship between the databaseFolder and database Dynamic Types, we don’t actually know whether any child objects were returned by the workflow. Therefore, in our scriptable task, we check that the value returned by the workflow is not null and not zero in length (either of which would mean no databases were found) and set the result output parameter accordingly.

result = (resultObjs !== null && resultObjs.length > 0);
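One thing to be aware of is that the strict comparison with null does not cover undefined, so if resultObjs was never assigned the `.length` access would throw. A slightly more defensive variant, sketched here with an illustrative `hasChildren` helper, uses the loose `!= null` comparison, which is false for both null and undefined:

```javascript
// True only when the related-objects lookup returned a non-empty array.
// "!= null" (loose inequality) is false for both null and undefined.
function hasChildren(resultObjs) {
    return resultObjs != null && resultObjs.length > 0;
}

console.log(hasChildren(null), hasChildren(undefined), hasChildren([]), hasChildren([{ id: "db-001" }]));
```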

Testing the workflows

My approach to testing is to try and test the code in small blocks where possible as I create it, rather than testing everything at the end and having to work backwards through the code to find the root cause. With Dynamic Types there is a limited amount of testing you can do in this style, and in my experience you will not find some mistakes, or unexpected results until you are testing the final solution from vRA.

Test the workflows in vRO first to make sure you get the results you expect when you browse the inventory. One of my early attempts generated no errors in the workflows, however I ended up with an infinite loop of databaseFolder objects in the vRO inventory, with the expansion of each folder generating a new one.

Even once we had the workflows running correctly from vRO, we experienced numerous failed tests in vRA before we got the code just right. So test often and use version control when making changes to the code. If you can, integrate your vRO instance with a source control endpoint such as Git and commit your code with each version change to maintain an external copy. We nearly lost all our work when the lab environment we were working in crashed.

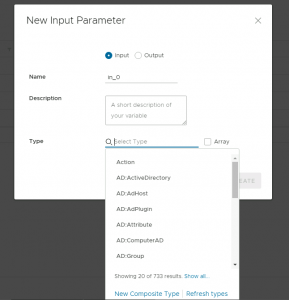

Populating the vRO Type fields

With those four workflows in place, and some additional workflows to control the REST API calls needed to look up information on our DMS server we have been able to create new Dynamic Types for DMS databases. There is one final action that needs to be taken, and again this one was discovered during troubleshooting and seems to be new in vRO 8, probably due to the HTML 5 client.

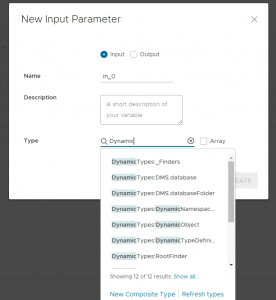

Although we have defined our Dynamic Types, tested the workflows, and successfully populated the vRO inventory, we found that we were not able to select our Dynamic Types as the Type for input/output parameters and variables; they did not show up in the list of Types presented by vRO.

To resolve this, we need to use the Refresh types link that appears at the bottom of the Type drop down box in vRO when editing a parameter or variable Type.

A few seconds later the list will be updated, and if you search for Dynamic you will see your new Dynamic Type appear in the list of values that can be selected.

This is also a necessary step if you want to use your Dynamic Type as a Custom Resource within vRA. If you miss this step, you will not be able to create your Custom Resource, as the Dynamic Type you defined is not recognised as a valid Type that can be used by vRA.

The Final Workflows

As Dean mentioned in the previous blog post we have made all of the content we have created across these two blog posts available for download with instructions on how to set them up in your own environment:

Regards

Katherine

