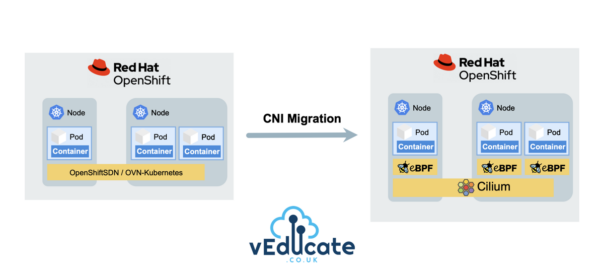

Recently, I’ve been seeing more and more questions about migrating an existing Red Hat OpenShift cluster to Cilium, driven by Cilium’s advanced networking capabilities, robust security features, and enhanced observability out of the box. This increase in interest is also boosted by the fact that Cilium became the first Kubernetes CNI to graduate in the CNCF Landscape.

In this blog post, we’ll cover the step-by-step process of migrating from the traditional OpenShiftSDN (default CNI pre-4.12) or OVN-Kubernetes (default CNI from 4.12) to Cilium, exploring the advantages and considerations along the way.

If you need to understand more about the default CNI options in Red Hat OpenShift first, I highly recommend this blog post as pre-reading before going through this walkthrough.

Cilium Overview

For those of you who have not heard of Cilium, or have only heard the name and know there’s a buzz about it: in short, Cilium is a cloud native networking solution that provides security, networking, and observability at the software level.

The buzz is so huge because Cilium is implemented using eBPF, a new way of interacting with and programming the kernel layer of the OS. This implementation opens a whole new world of options.

I’ll leave you with these two short videos from Thomas Graf, co-founder of Isovalent, the creators of Cilium.

Does Red Hat support this migration?

Cilium has achieved the Red Hat OpenShift Container Network Interface (CNI) certification by completing the operator certification and passing end-to-end testing. Red Hat will support Cilium installed and running in a Red Hat OpenShift cluster, and collaborate as needed with the ecosystem partner to troubleshoot any issues, as per their third-party software support statements. This would be a great reason to look at Isovalent Enterprise for Cilium, rather than using Cilium OSS, to get support from both vendors.

However, when it comes to performing a CNI migration for an active existing OpenShift cluster, Red Hat provides no guidance, unless it’s migrating from OpenShiftSDN to OVN-Kubernetes.

This means that migrating an existing, running Red Hat OpenShift cluster to a third-party CNI is a grey area.

I’d recommend speaking to your Red Hat account team before performing any migration like this in your production environments. I have known large customers to take on this work/burden/supportability themselves and be successful.

Follow along with this video!

If you prefer watching a video and following along live, as I do at times, I’ve got you covered: the video below covers the content from this blog post.

Pre-requisites and OpenShift Cluster configuration

As per the above, understand this process in detail, and if you follow it, you do so at your own risk.

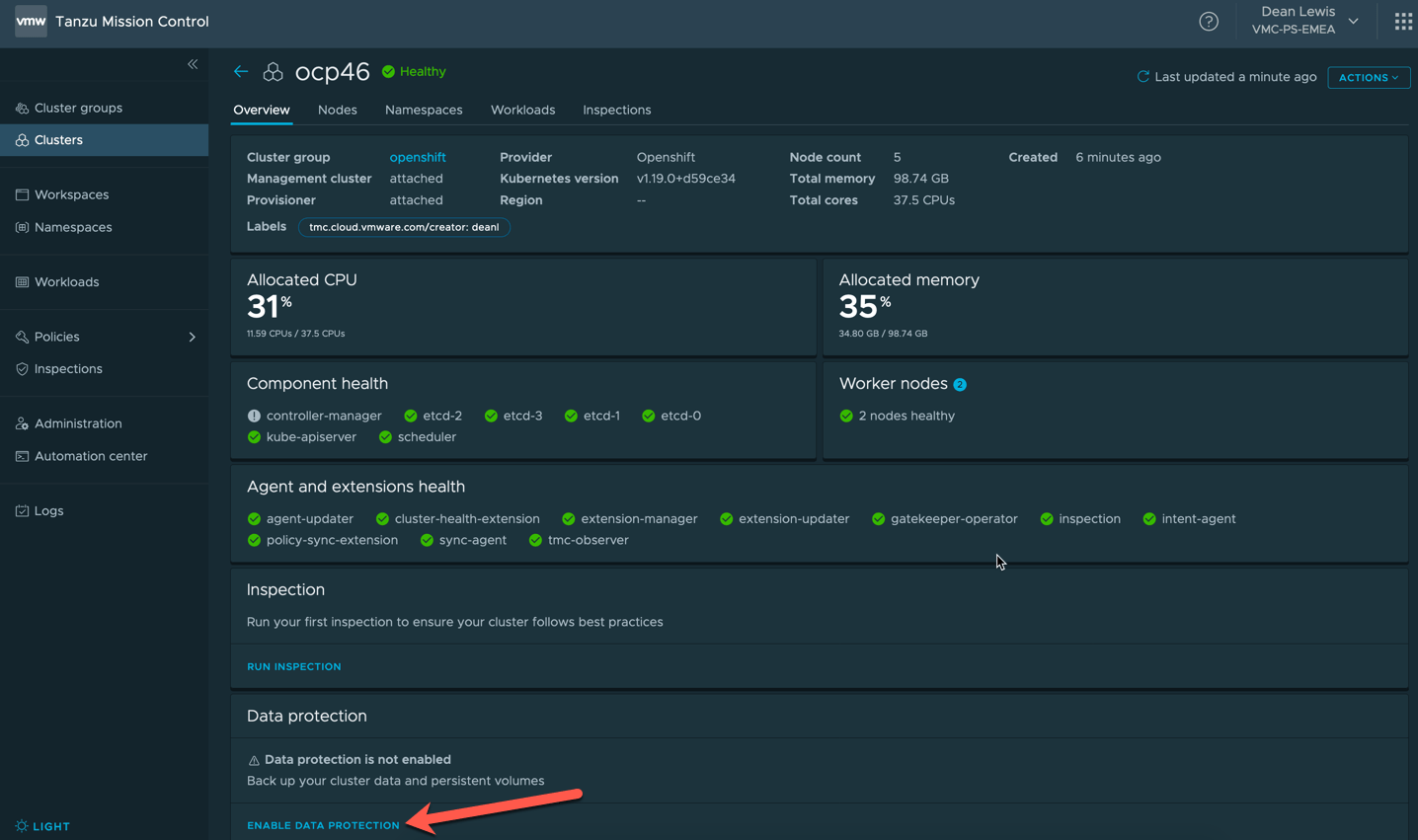

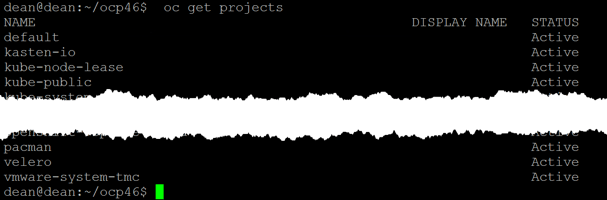

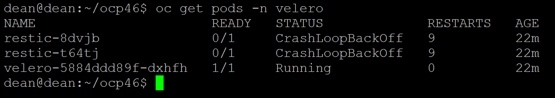

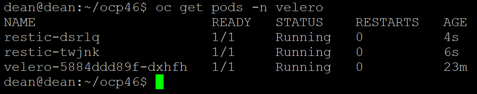

For this walkthrough, I’ve deployed an OpenShift 4.13 cluster with OVN-Kubernetes, with a sample application (see below). For deploying OpenShift, you can see these posts I’ve written, or follow the official documentation.

- 90DaysOfDevOps – Red Hat OpenShift – deep dive into features and installation

- Deploying OpenShift clusters (IPI) using vRA Code Stream

- How to specify your vSphere virtual machine resources when deploying Red Hat OpenShift

- How to deploy OpenShift 4.3 on VMware vSphere with Static IP addresses using Terraform
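Before starting any migration, it is worth confirming which CNI the cluster is actually running. The following is a minimal sketch, not part of the original walkthrough, that reads the cluster-wide Network configuration through the oc CLI and reports the network type. It assumes oc is installed and logged in to the cluster. The helper names and the SAMPLE document are illustrative only; the sample is a trimmed-down stand-in, not output captured from my cluster.

```python
import json
import subprocess


def current_network_type(network_config: dict) -> str:
    """Return the CNI name from a Network config object.

    Prefers status.networkType (what the cluster is running) and
    falls back to spec.networkType (what was requested).
    """
    status = network_config.get("status", {})
    spec = network_config.get("spec", {})
    return status.get("networkType") or spec.get("networkType", "unknown")


def fetch_network_config() -> dict:
    """Fetch the cluster Network config as a dict.

    Assumes the oc CLI is on PATH and already logged in.
    """
    result = subprocess.run(
        ["oc", "get", "network.config.openshift.io", "cluster", "-o", "json"],
        check=True,
        capture_output=True,
        text=True,
    )
    return json.loads(result.stdout)


# Illustrative, trimmed-down example of the object shape (not real output).
SAMPLE = {
    "apiVersion": "config.openshift.io/v1",
    "kind": "Network",
    "metadata": {"name": "cluster"},
    "spec": {"networkType": "OVNKubernetes"},
    "status": {"networkType": "OVNKubernetes"},
}
```

Against a live cluster, current_network_type(fetch_network_config()) would be expected to report OVNKubernetes or OpenShiftSDN for the two default CNIs discussed here. Calling current_network_type(SAMPLE) exercises the same logic without a cluster.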

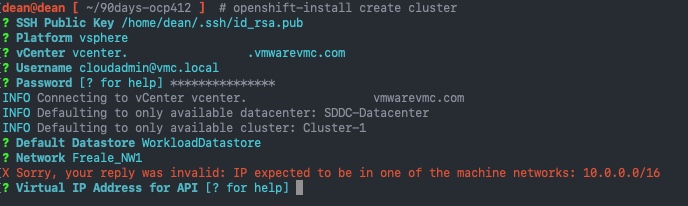

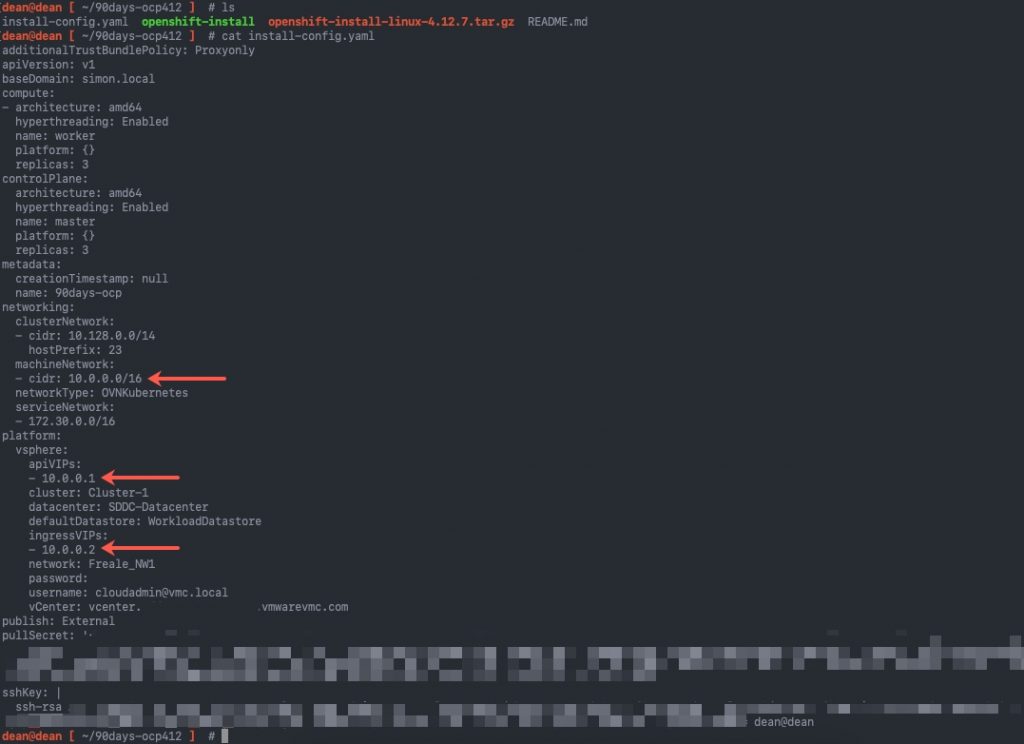

Here is a copy of my install-config.yaml file. It was generated using the openshift-install create install-config wizard. Then I ran the openshift-install create cluster command.