As part of my virtual VMUG tour, I submitted a session to the VMUG call for papers covering data protection for Tanzu Kubernetes workloads. (Most of this applies to any Kubernetes environment.)

This was picked up by Erik at the Belgium VMUG for their UserCon in June 2021. After the event, the session videos remained available on demand for a short time, but there were no plans to upload them for everyone. So thank you to Michael Cade, who offered to host this session for all on the Cloud Native Data Management YouTube channel.

In the session below, I cover the following areas:

- What kind of data protection do you need?

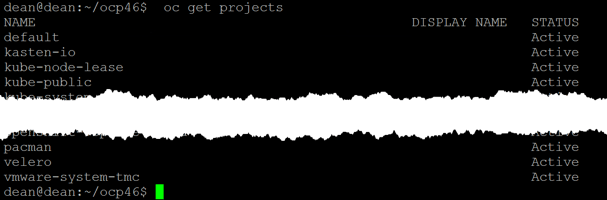

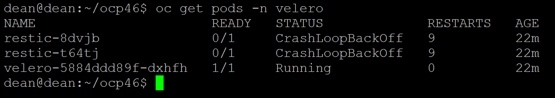

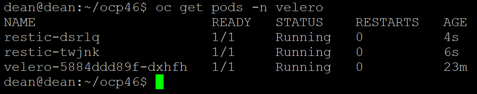

- Velero

- The open source data protection project from VMware

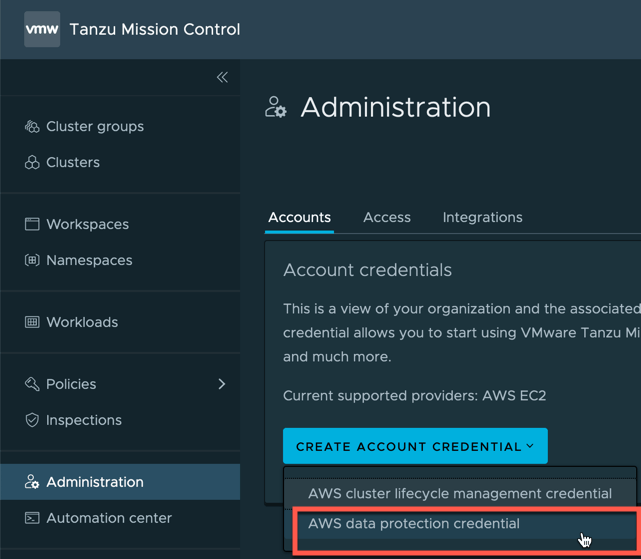

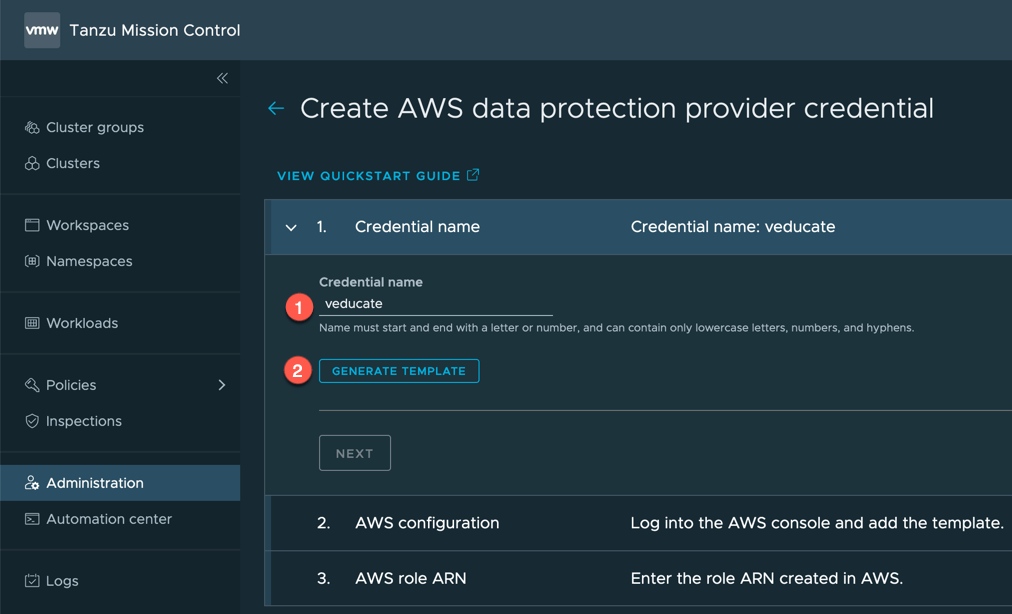

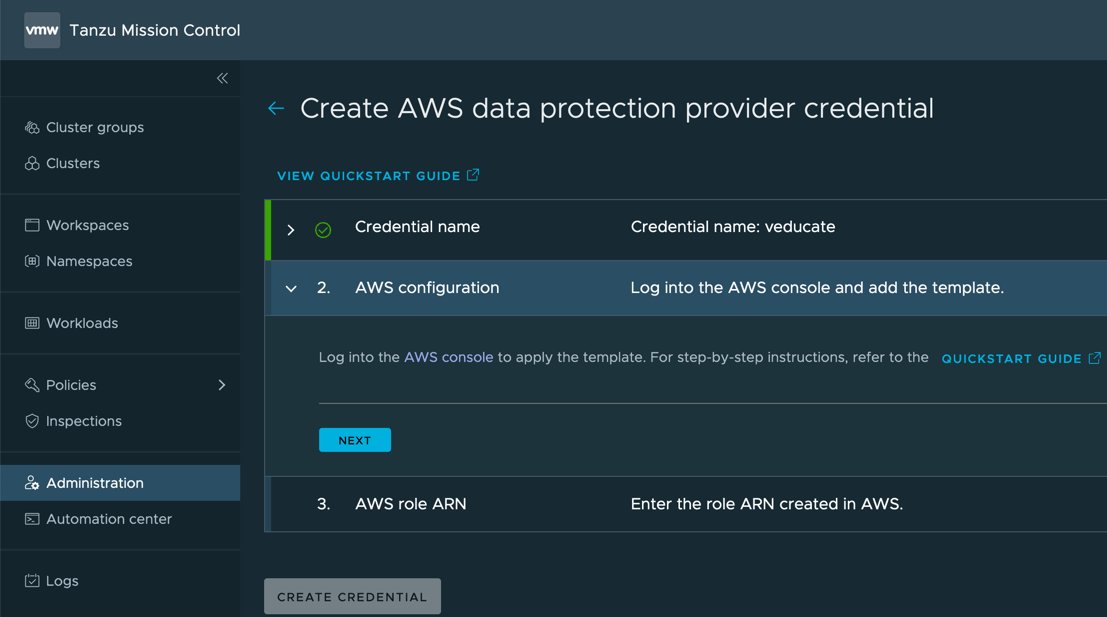

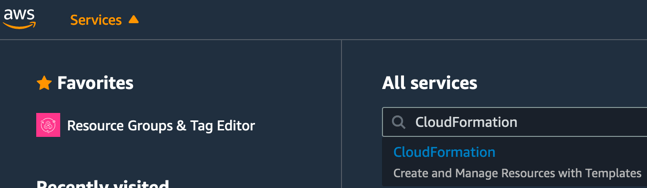

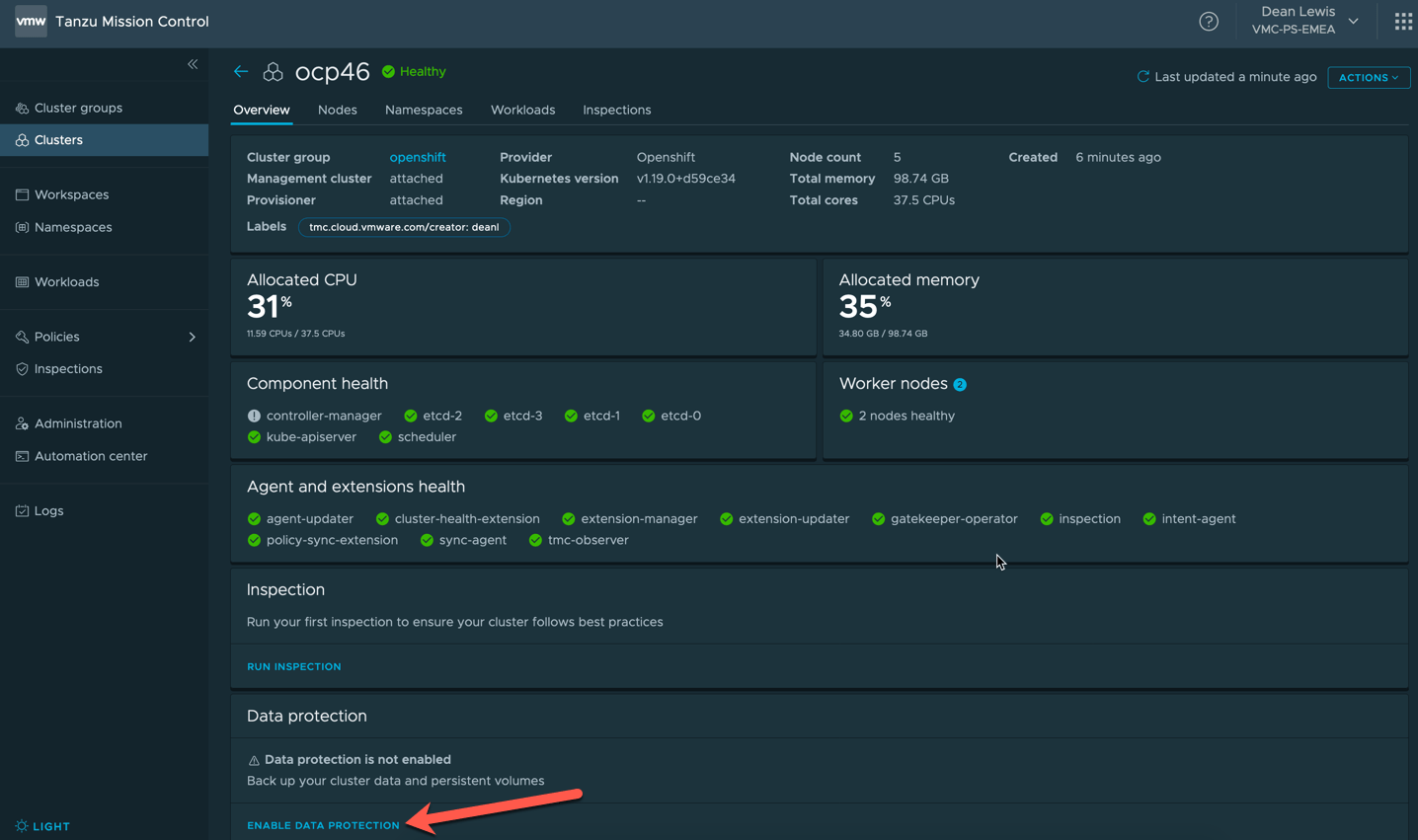

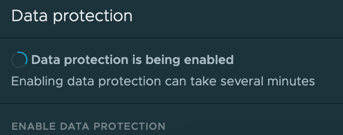

- Tanzu Mission Control

- VMware's Kubernetes fleet management platform, which uses Velero for data protection.

- 3rd Party Options

- A nod to the 3rd party ecosystem that offers enterprise data protection and management software, such as:

- Kasten

- Portworx


There is even a quick technical demo in there, with a little technical hiccup I had to style out!

Regards