The Issue

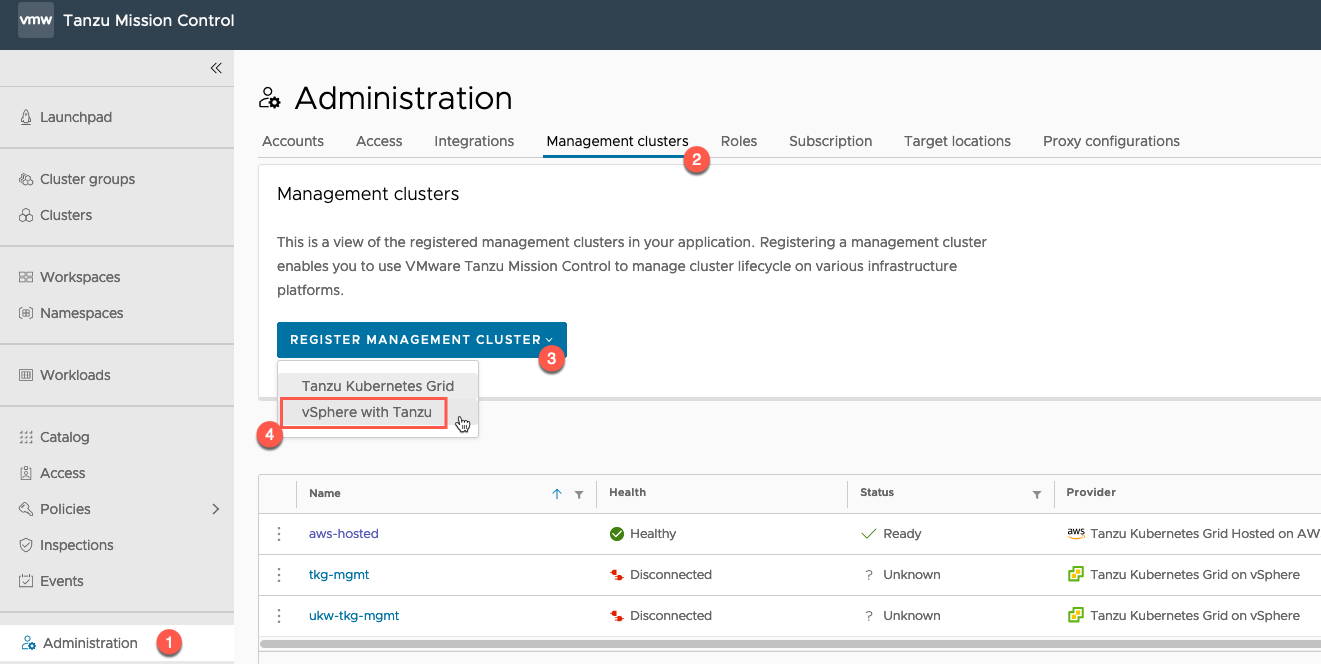

When deploying a brand new Tanzu Kubernetes Grid management cluster to a vSphere environment, we kept hitting failures like the one below. The deployment was very vanilla: default settings, with no extra metadata entered into the build.

!! [1223 15:26:17.84239]: init.go:732] Failure while deploying management cluster, Here are some steps to investigate the cause:

!! [1223 15:26:17.84256]: init.go:733] Debug:

!! [1223 15:26:17.84262]: init.go:734] kubectl get po,deploy,cluster,kubeadmcontrolplane,machine,machinedeployment -A --kubeconfig /home/michael/.kube-tkg/tmp/config_Qd01VhPd

!! [1223 15:26:17.84272]: init.go:735] kubectl logs deployment.apps/ -n manager --kubeconfig /home/michael/.kube-tkg/tmp/config_Qd01VhPd

!! [1223 15:26:17.84278]: init.go:738] To clean up the resources created by the management cluster:

!! [1223 15:26:17.84283]: init.go:739] tanzu management-cluster delete

✘ [1223 15:26:17.84291]: init.go:91] unable to set up management cluster, : unable to patch cluster object: unable to patch optional metadata under labels: unable to patch the management cluster object with optional metadata: unable to patch the cluster object: error while applying patch for "&TypeMeta{Kind:,APIVersion:,}" tkg-system/tkg-mgmt-vsphere-20221223151757: Cluster.cluster.x-k8s.io "tkg-mgmt-vsphere-20221223151757" is invalid: [metadata.labels: Invalid value: "": name part must be non-empty, metadata.labels: Invalid value: "": name part must consist of alphanumeric characters, '-', '_' or '.', and must start and end with an alphanumeric character (e.g. 'MyName', or 'my.name', or '123-abc', regex used for validation is '([A-Za-z0-9][-A-Za-z0-9_.]*)?[A-Za-z0-9]')]

The Cause

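The tooling writes an erroneous CLUSTER_LABELS value (:,) into the generated cluster config file. As the error message shows, this results in a label with an empty name, which fails validation and causes the build error.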
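To see why an empty label name is rejected, here is a minimal sketch that checks a few names against the regex quoted in the error message. It assumes a shell with grep -E available; the helper name is_valid_label_name is hypothetical and only illustrates the pattern match, not the full set of Kubernetes label rules.

```shell
#!/usr/bin/env bash
# Regex copied from the error message, anchored so the whole name must match.
regex='^([A-Za-z0-9][-A-Za-z0-9_.]*)?[A-Za-z0-9]$'

# Hypothetical helper: succeeds only if the given name matches the regex.
is_valid_label_name() {
  printf '%s\n' "$1" | grep -Eq "$regex"
}

# Try the examples from the error message plus an empty name.
for name in "MyName" "my.name" "123-abc" ""; do
  if is_valid_label_name "$name"; then
    echo "valid:   '$name'"
  else
    echo "invalid: '$name'"
  fi
done
```

The three example names from the error message pass, while the empty name fails because the pattern requires at least one alphanumeric character.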

The Fix

Find the most recently created YAML file in:

~/.config/tanzu/tkg/clusterconfigs/

and comment out the following line:

CLUSTER_LABELS: :,

The line will now look like this:

#CLUSTER_LABELS: :,

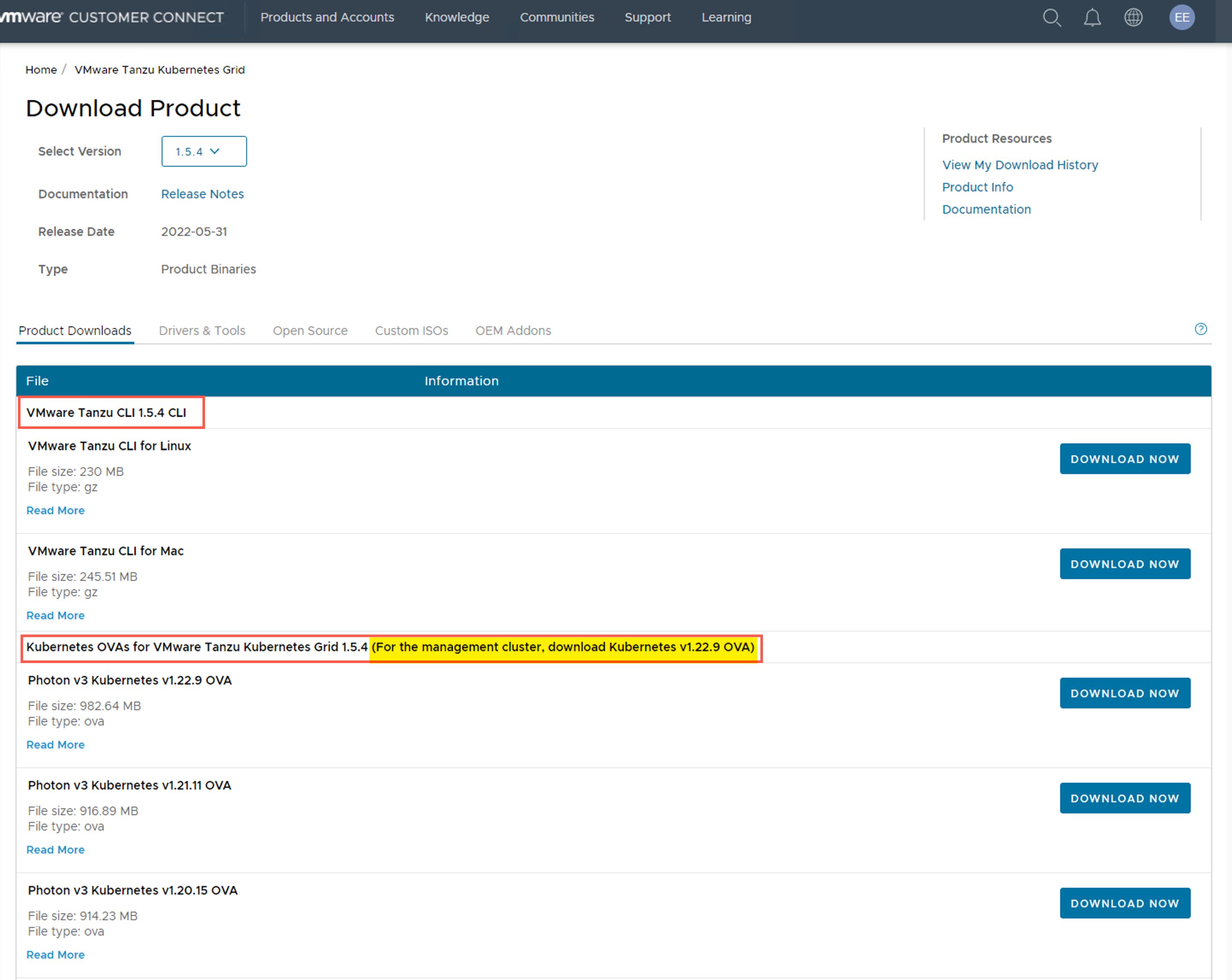
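If you would rather script the edit, the following is a rough sketch of the same two steps: pick the newest YAML file in the config directory and comment out the CLUSTER_LABELS line. It assumes a shell with ls, head and sed available. The function names latest_config and comment_out_cluster_labels and the CONFIG_DIR variable are hypothetical helpers, not part of the Tanzu CLI.

```shell
#!/usr/bin/env bash
# Directory named in the post; set CONFIG_DIR to point somewhere else if needed.
CONFIG_DIR="${CONFIG_DIR:-$HOME/.config/tanzu/tkg/clusterconfigs}"

# Print the most recently modified .yaml file in a directory (nothing if none).
latest_config() {
  ls -t "$1"/*.yaml 2>/dev/null | head -n 1
}

# Prefix an uncommented CLUSTER_LABELS line with '#', keeping a .bak backup.
comment_out_cluster_labels() {
  sed -i.bak 's/^CLUSTER_LABELS:/#&/' "$1"
}

file="$(latest_config "$CONFIG_DIR")"
if [ -n "$file" ]; then
  comment_out_cluster_labels "$file"
  echo "Patched $file"
else
  echo "No .yaml file found in $CONFIG_DIR"
fi
```

The sed expression only matches lines that start with CLUSTER_LABELS:, so running the script a second time leaves an already commented line alone. Check the patched file by eye before re-running the deployment.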

Now re-run the cluster creation from the CLI, pointing it at the edited file:

tanzu mc create --file {file_name.yaml}
